The Problem: Wasting Class Time Reviewing Trigonometry

Last year the Advanced Algebra II kids did a boatload of trigonometry, and this year I had to make sure my kids had a strong grasp of the basics (it’s been ages since they’d seen it) before we delved into trigonometry in Advanced Precalculus. In previous years, I always did a “trig review unit,” which always felt like wasted time. I like to use class time to give kids things where they have to rely on each other, but during the review unit, kids didn’t need each other much. Different kids needed to review different things. I found ways around it, but overall, it felt like wasted time.

The Solution: Mix Review A Little Each Night During the Prior Unit (Which Posed Another Problem…)

So the other teacher and I decided that while we were working on sequences and series, we would also give kids some basic trig questions each night, maybe 10-15 minutes’ worth. Although I can’t see myself using this curriculum in my classroom to teach the material for the first time, I really love eMathInstruction’s packets. They are well thought out, their problems highlight drawing connections among tables, graphs, and equations, and they often give forwards and backwards problems.

So each night I gave a selection of problems from one or two of these lessons — all topics they had worked on last year — and had kids do them. I chose the problems and lessons based on the specific things I needed kids to remember for what we were doing this year. And then the next day, I gave the answers and let kids resolve any difficulties.

But I wanted to be thoughtful about this. It was review, but I needed to make sure that kids really had these basics down before we jumped with both feet into the depths of trigonometry. And remember, all of this was happening during a unit on sequences and series. I was afraid that without some sort of feedback mechanism, I was going to finish this review and find that kids hadn’t internalized any of it or regained the fluency with trig basics that they had last year. So I worked with another teacher (who has been acting as my “teacher coach” this year) to circumvent this problem.

The Solution To The Problem My Solution Generated: Short Daily Feedback Quizzes

This is how it worked…

Let’s say on Friday students were asked to complete review problems from Lessons #1 and #2 from the review packet. Then on Monday, I give each group the answers, have them check their own work and talk with their group to resolve any difficulties (and if that doesn’t work, ask me!), and then the rest of the lesson is continuing on with sequences and series.

Then on Tuesday, we’d start class with a short 3-5 minute check-in super basic quiz on the trig review that was due on Monday and we had already gone over. It might look like this:

The back of the quiz would look like this (kids flip to the back when they’re done):

Then we have the rest of the class on Tuesday, consisting of going over the new trig review answers for a few minutes, and then working on sequences and series.

Tuesday night, I’ll mark up the quizzes. They are worth a whopping total of 1 assessment point (most of my assessments are 30-40 points). But here’s the catch: the score is either a 0 or a 1. To get the point, you need to get all parts correct. I’m okay with that because this is an advanced class and these questions are super basic. This is feedback for the student: do they really know the basic material, or do they merely think they know the basic material? [1]
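For anyone who tracks these quizzes in a script rather than on paper, the all-or-nothing rule could be sketched roughly like this in Python. This is only an illustration: the function name `quiz_score` and the list-of-booleans format are assumptions, not part of the original grading workflow.

```python
def quiz_score(parts_correct):
    """Return 1 only if the quiz has parts and every part is correct, else 0.

    `parts_correct` is a hypothetical list of booleans, one per quiz part.
    """
    return 1 if parts_correct and all(parts_correct) else 0


# Hypothetical three-part quiz: a single missed part means no point.
all_right = quiz_score([True, True, True])
one_miss = quiz_score([True, False, True])
print(all_right, one_miss)
```

The empty-list check simply keeps a blank quiz from earning the point by accident.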

On Wednesday, we’d start class with a short basic quiz on the review trig material we went over on Tuesday, kids would get back the marked-up quizzes they took on Tuesday (on Monday’s material), and we’d go over the review trig material due today (before continuing on with sequences and series).

A note about timing… Most of our classes are 50 minutes. So about 4 minutes were spent taking the brief quiz, about 5-8 minutes were spent going over the trig review work and resolving any difficulties, and the remaining time was spent on the current unit of sequences and series.

At the very end of all the trig review, I had a mini-assessment on all the trig review material.

Framing The Quizzes

When presenting the daily quizzes to students, I expected a lot of groans. Thankfully I didn’t get any audible ones, which I attribute to taking my time framing the plan for them. I wanted them to understand the thinking and impetus behind this approach to the review material. I wanted to be transparent.

First, I acknowledged that it was a long time ago (last year!) that they had worked on trig. So it would be unfair of me to expect them to know things like or how to graph

immediately. I wanted us to build up to it, slowly, so they had time to practice and get feedback. It was my job to make sure that before we resumed trig that they had refreshed themselves with the basics.

Second, I talked them through the idea behind the daily quizzes. I made sure that kids understood they would be short and only on material they had reviewed and had time to practice first. I highlighted that the quizzes served three purposes.

- They are low-stakes feedback for you on what you truly know and what you don’t know.

- They provide specific places for additional help if you find you don’t know something.

- They are feedback for me on what y’all are good with and what you need work with, so I know to talk publicly about anything I’m noticing the whole class needs help with.

I did mention the grading, but I didn’t put much emphasis on that. The score wasn’t super important, except as feedback.

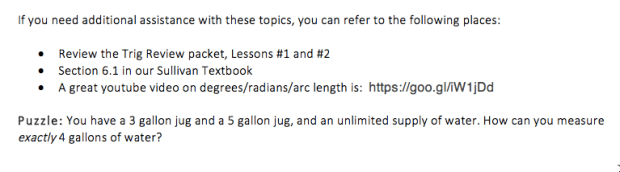

You might have noticed that the back of the quizzes gave specific places for students to get help with the concepts/ideas on the quiz. This was an idea my teacher coach and I generated together. The conversation we had was about feedback in general. We teachers can be good about giving feedback, but we never teach students explicitly how to use that feedback. What do they do with it? By providing specific resources/places for kids to go to get additional help (along with their teacher and classmates, of course), we thought this might highlight that we really do want these quizzes to be part of a feedback loop.

The Feedback

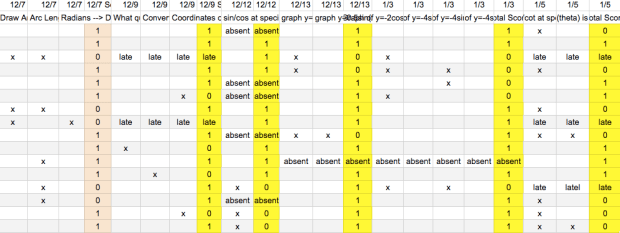

I took data on the quizzes. You’ll note that before each 1 or 0 are a few columns. Those are the concepts being asked. An “x” means that the student got that part incorrect. That data helped me look for trends, and what was more challenging for students, so I knew if I had to explicitly talk about any concept/idea in class.
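The same kind of record could be kept in a short script instead of a spreadsheet. The sketch below is a hedged illustration only: the student labels and concept names are invented, and the data layout (a set of missed concepts per student, standing in for the “x” marks) is an assumption rather than the actual gradebook.

```python
from collections import Counter

# Hypothetical marks for one quiz: each student maps to the set of concepts
# marked "x" (missed). All names here are invented for illustration.
quiz_marks = {
    "Student A": set(),
    "Student B": {"reference angles"},
    "Student C": {"reference angles", "radian conversion"},
}


def score(missed):
    """All-or-nothing: 1 if nothing was missed, otherwise 0."""
    return 0 if missed else 1


def misses_by_concept(marks):
    """Count how many students missed each concept."""
    counts = Counter()
    for missed in marks.values():
        counts.update(missed)
    return counts


scores = {student: score(missed) for student, missed in quiz_marks.items()}
trends = misses_by_concept(quiz_marks)
print(scores)
print(trends.most_common())  # most frequently missed concepts first
```

A concept that shows up near the top of the tally is a candidate for a few minutes of whole-class discussion.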

I was planning on also using this feedback later. I was going to look at the assessment kids took at the end of the review, and see if there was any relationship between kids’ performance on the assessment and these feedback quizzes. I didn’t get a chance to do this, and truth be told, the average was so high for the review assessment (89%) I suspect it would have been a waste of time.

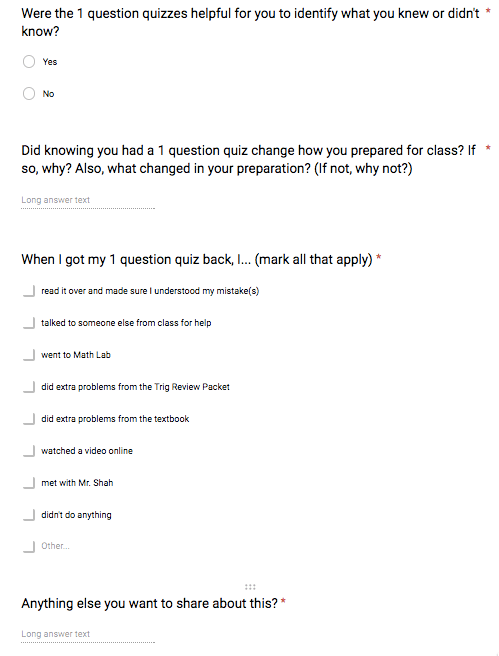

I also wanted to know how students felt about this process. This was an experiment for me, but to know if it succeeded, I needed feedback from students. I wanted to know (a) if they found the feedback quizzes were helpful, (b) if the feedback quizzes changed their practice in any way, and (c) if they used the feedback from the quizzes in any way. So my teacher coach and I wrote this short and pointed set of questions for them:

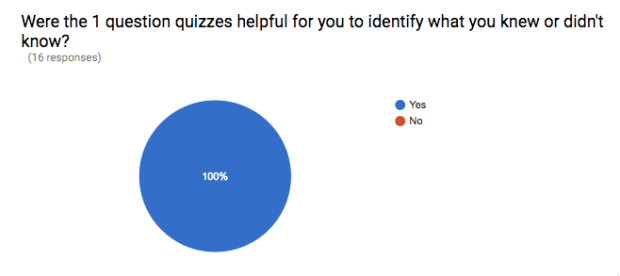

The results were interesting.

When I asked whether their preparation changed, most kids said it did change a bit. But even kids who said it didn’t would go on to say something indicating that it did (highlighted in red)!

This is what kids did with the feedback (see list above from survey to see what each bar corresponds with):

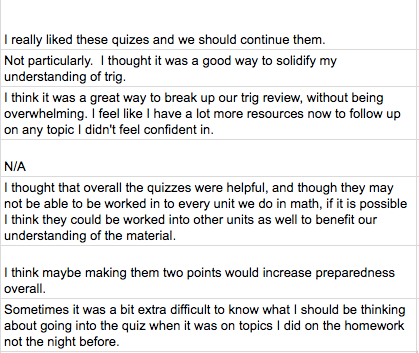

And finally, here’s what kids wrote in the “anything else” box:

Overall, a success! Not only because kids found them useful on the whole, and because their practice changed because of them (for the better), but also because they did quite well on the trig review assessment (as I noted above, earning an 89% average).

More than figuring out how to deal with the annoying “how to review trig from the previous year before starting on trig in the following year” problem, this whole enterprise was an interesting excursion into feedback for me. I was hoping to find a way to create a feedback loop that was doable (and this was! it only took 10 minutes each day to mark up the simple quizzes) and created a change in student practice (which it did, because knowing there was something small students were accountable for each day changed how most kids prepared just a little bit).

To me this post and this experiment aren’t really about trig, but about now having another tool (daily feedback quizzes) in my teacher toolbelt to pull out at appropriate times.

[1] I debated whether I wanted to put a grade on these at all, or just let them be feedback with no score attached. I went back and forth about this for a long while. But ultimately, I knew that attaching a score, no matter how minimal, to the quizzes would effect more change than if I didn’t. But after introducing it, I didn’t mention the grade/score once when talking about them. I would mention common mistakes I noted and talked about ways to get extra practice with something or another. I kept my focus on the notion of feedback, and doing something with that feedback.

Anyway, your post revolves around your system of bite-sized, 1-point reviews and the benefits you’ve found when using them. You said you wanted to find a feedback loop that was doable and changed student practice. You also wanted to be able to continue moving forward with the curriculum at the same time you were reviewing. And your system accomplished that by:

a) Chunking the material into bite-sized bits that were distributed across many days; and

b) Being very manageable, time-wise, for you to create and grade.

The fact that the amount of content was bite-sized made the goal of each review quiz not only perceivable by students, but doable from a preparation standpoint. Students knew what to do and figured they could do it. So they did it. They spent a few extra minutes each day looking at the material and practicing it a bit more. This resulted in more learning, period.

The method that I have developed, which is called The Fill Method, streamlines this process for use over the lifetime of an entire course. Instead of being used for reviewing material from previous courses, this method reviews every lesson that is taught throughout the year two days after a student’s initial exposure. This means every day, usually as part of students’ warm-up routine as they enter class, students take a 2-minute, 1-question quiz that asks them to perform the skill that they began learning two days prior. Over the course of a year, this means that we have a binary screening of every student’s mastery of every lesson for the whole year.

We use the information in a number of ways that have drastic effects on student learning. Firstly, we have set up daily extra help classes that run during the time of day when most students in a grade have study halls. Every student who does not answer the quiz correctly is scheduled to attend the extra help class the next day instead of going to their study hall. This means that these extra help classes are populated with every available student who missed the quiz from the previous day. The teacher in the class then redelivers the lesson, focusing on student misconceptions and just generally giving students more successful experience with the material. All students are then re-assessed and their “status” for that lesson is updated. This happens every day for every lesson. We are talking about a serious amount of additional learning.
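To make the routing concrete, here is a rough Python sketch of the cycle described above: record a binary quiz result, build the next day’s extra help roster, then update each student’s status after re-assessment. It is not the actual software, whose internals are not described here; the function names, student names, and dictionary layout are all assumptions for illustration.

```python
# mastery[student][lesson] is True (mastered) or False (not yet).
# Hypothetical structure and names, for illustration only.
mastery = {}


def record_quiz(mastery, lesson, results):
    """Store (or overwrite) each student's binary result for a lesson."""
    for student, correct in results.items():
        mastery.setdefault(student, {})[lesson] = correct


def extra_help_roster(mastery, lesson):
    """List students whose current status for the lesson is 'not yet'."""
    return sorted(
        student
        for student, lessons in mastery.items()
        if lessons.get(lesson) is False
    )


# Daily quiz on a lesson first taught two days earlier.
record_quiz(mastery, "Lesson 12", {"Ana": True, "Ben": False, "Cy": False})
roster = extra_help_roster(mastery, "Lesson 12")

# After the extra help class, students are re-assessed and statuses updated.
record_quiz(mastery, "Lesson 12", {"Ben": True, "Cy": False})
roster_after = extra_help_roster(mastery, "Lesson 12")
print(roster, roster_after)
```

A real system would also need to account for which students are actually free during the extra help period, which this sketch leaves out.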

And in addition to the systematic reteaching, the students’ behavior changes in the exact same ways you noted in your post. They regularly review the material in preparation for the daily quiz.

In the long term, a record of every lesson, with lessons grouped into units, is maintained for students. They can always look to see which lessons are still left to be mastered and which units have the most unmastered content. This is an incredible resource as they prepare for unit tests, midterms and final exams.

In addition to routing kids to immediate extra help, this provides our teachers with incredible information about their teaching performance. What are our least successful lessons and units? This is no longer a mystery. We know exactly what to spend our energy re-working together. And the best part is we can see if it worked the next year by comparing the new approach’s performance to the old.

The learning gains we have experienced as a result of using this method with students have been unfathomable (until they happened). The median of our students’ final exam scores has increased by 9 points, from 84% to 93% (93% on the final exam! The middle kid!). The percentage of students who are rated proficient on our state’s assessment has gone from 39% to 75% in one year of use.

And this was just our first year. We are actually in our third full year of use and the rest of the middle schools in our district now use the method, with other districts beginning pilots right now.

What is for sale? The software that automates the quiz creation, extra class roster generator, and nested record keeping (which students have mastered which lessons). The software is what really makes the method manageable. It would not be feasible to do by hand. You’ve got to see it. It’s so easy, so fun, and so effective. I have no interest in selling it to you. I promise.

I would love to get your opinion on it, though. Please email me and I will set you up with an account if you’re interested (I assume you can see my email being the person who runs the blog. If not, reply to this and let me know).

I just want to reiterate that this is a completely grassroots thing that we are super excited about around my school, district and region. I am just an extremely passionate teacher who made something that is having a really positive effect on kids.

Thanks for your post. You will likely be seeing more of me. I’ve decided to take the plunge into the online community :-) Thanks for listening.

And sorry about the length. I don’t know how to make it shorter!

Hihi – sorry for the late reply! I am going to shoot you an email! But I wanted to publicly thank you for writing all this!!!

THIS WAS SUPPOSED TO BE THE FIRST COMMENT OF THE SIX:

Hey Sam. My name is Harry O’Malley. I am a math teacher in the Buffalo, NY area. I am looking to start a blog and I thought it would be important to get to know the blogging community. I occasionally read Dan Meyer’s blog and very occasionally comment. I’ve known about you and your blog for a few years now (mostly because Dan mentions it), but have only visited it a few times. Of all of the blogs I’ve visited so far tonight in my first stint at finding someone to connect with, yours is by far the best.

AND THIS WAS SUPPOSED TO BE THE SECOND COMMENT OF THE SIX (THE OTHER COMMENTS ARE IN ORDER AFTER THIS, STARTING AT THE TOP):

I came here tonight simply to read your most recent post, whatever it was going to be, and comment on it, with the only intention being to connect with you. The nature of your post, though, is so related to work I’ve been doing recently that I have to bring it into the discussion. “Great”, you might be thinking, “what better way to connect with someone than to communicate with them about types of work that you are commonly engaged in!”. And this is true. It is fortunate that your post is related to work I am doing. The only reason I am making such a fuss is that my work has recently become for sale and I don’t want you to think that I am just commenting on your page to sell you something. Right hand up to the good lord I did not come here with that intention. I am actually just very interested in starting conversations with you because I think that what you have to offer is valuable.

Hi,

Thanks for sharing this great way to review a topic without reviewing it the traditional old ways. I’m a high school math teacher in Orange County, CA and this year I’m teaching Geometry, Algebra 2, and Algebra 2 Trig. I’ve been teaching high school math for about 8 years now and the curriculum has changed so much for the lower levels in our district. I am usually spending a few days or a week reviewing topics before starting a new unit. I always lose the interest of a few students because they would either know it already and turn off, or it would be the first time they ever saw the topic and turn off. I feel like your way of reviewing for a few minutes each period will keep me from losing students.

I’m going to try your method of reviewing in all my classes because I’m finding the need to review Algebra 1 topics in all my classes more often than in the past. I really love the idea of using Google surveys because students can take them anytime outside of class and I will receive honest feedback from EVERY student.

Thank you again for sharing what you do in your classroom.

Howdy! Thanks for writing a comment – and sorry for the delayed reply. Spring break and then a crazy return from Spring Break got in the way.

I’d be interested to know if what you end up doing for reviewing ends up working, if you needed to make tweaks, or if it ended up a complete flop! If you remember, I’d love an update!